Kate Critiques Apple’s Deep Fusion in an Ad-Hoc Manner

I want to offer a critique of the iPhone 11 Pro camera, specifically Deep Fusion, the machine-learning feature that adds in detail.

While it’s an impressive piece of engineering, it doesn’t always lead to great photos. Sometimes, it imparts so much computed texture to minuscule details that the image feels unnaturally sharp, a sort of photographic equivalent of the “uncanny valley.” In a lot of instances it doesn’t feel beautiful to my eye, usually when the subject involves textures like fur or bricks on distant buildings.

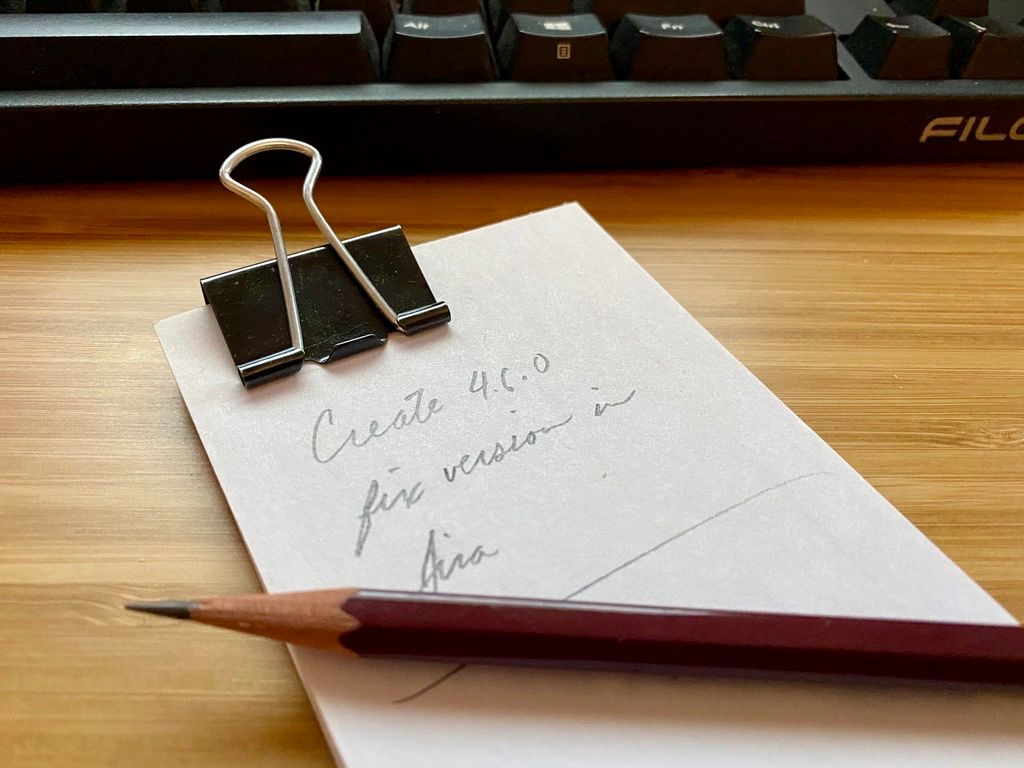

Take this photo of an index card as an example…

This image is a great example of the machine-learning part of Apple’s camera that I think still needs to be tweaked. Look at the shine of the light on that index card. The camera is capturing details and emphasizing them. That’s great if you’re photographing an intricate tapestry, but not ideal for a snapshot of an index card.

Let’s look at a less egregious example that’s only a little distracting.

Look at that fur under Gambit’s chin. The ML algorithm was like, “Let me lovingly render each tuft of fur here. Texture is important.” This one is more arguable. Maybe it’s better? Maybe it’s not? It’s a lot more subjective, but I still don’t care for it.

Compare this with a photo taken with my Canon EOS RP mirrorless camera with a 50 mm f/1.8 lens.

You can still see the pattern of his under-chin fur tufts here, but it isn’t emphasized by machine-learning algorithms.

Now, it’s possible that we just need time to adjust to this look, much as we did with the HDR look (skies and subjects all exposed correctly by taking a series of images that are layered together to produce a single perfectly exposed image). We have adjusted to HDR so thoroughly that traditional photos taken in harsh lighting conditions can now appear strange or wrong. I don’t want to be too much of a curmudgeon here. I just feel that, as an artistic tool, the iPhone 11 Pro camera isn’t helping me create the images that my mind’s eye sees when I’m composing. This is mostly to do with me being more accustomed to cameras with little or no machine learning happening. Specifically, items that I intend to fade into the “background” won’t necessarily do so, and I need to alter my compositions with the iPhone 11 Pro to compensate.